The Uptime Engineer

👋 Hi, I am Yoshik

You'll learn:

- a mental model for how Docker executes a multi-stage build under the hood

- the cache invalidation rules that make or break your CI build time

- the exact pattern for keeping dev, test, and prod images from a single Dockerfile

🔥Tool Spotlight

Dive - layer-by-layer image explorer that shows you exactly what each Docker layer adds, modifies, and wastes, with an efficiency score.

dive your-image:latest

📚 Worth Your Time

Multi-stage builds - Docker official docs, the authoritative reference on stage syntax, COPY --from, named stages, and target builds.

https://docs.docker.com/build/building/multi-stage/

13 ways to optimize Docker builds - a practical list covering cache strategy, layer ordering, and multi-stage patterns with real Dockerfile examples.

https://overcast.blog/13-ways-to-optimize-docker-builds-ba1151b256f3

You've probably wondered why your Docker image is this big.

Simple Go or Node.js app. Clean codebase. Docker image comes out at 1.3GB.

You switch to alpine. Get it down to 900MB. Ship it.

That's not a fix. That's a smaller version of the same problem.

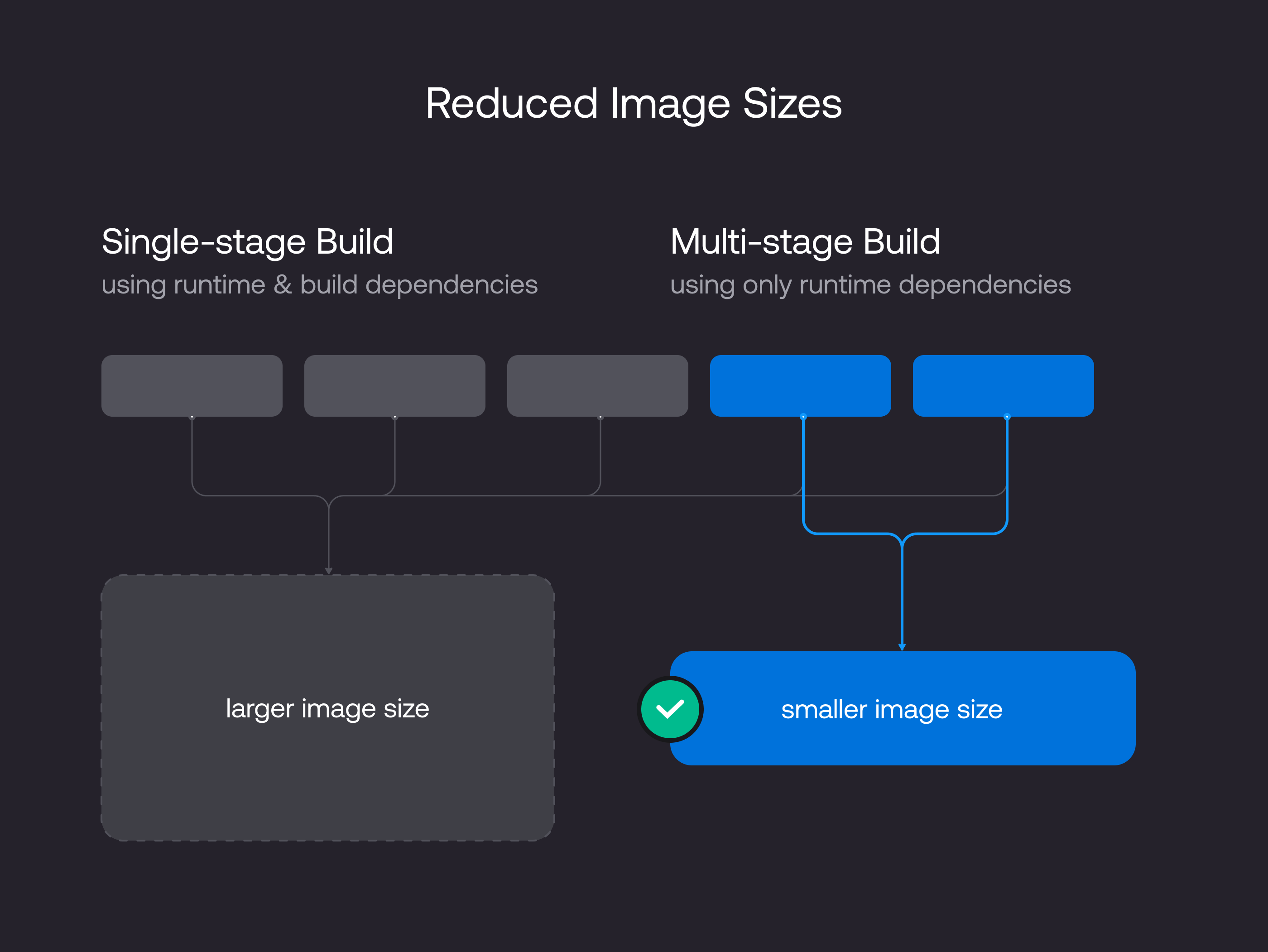

The real issue: a single-stage Dockerfile forces your production container to carry everything that was ever needed to build your binary. The compiler. The test runner. devDependencies. Intermediate object files.

None of that handles a single production request. All of it ships, gets pulled, and sits in your attack surface.

Multi-stage builds fix this at the root. But to use them correctly, you need to understand what Docker is actually doing, not just the syntax.

Layers are additive and permanent

Every RUN, COPY, and ADD creates an immutable filesystem diff. Docker stacks these diffs in order and presents them as a single unified filesystem at runtime.

The trap: even if you delete something in a later layer, the bytes from earlier layers are still physically in the image.

Layer 1: base OS → 80MB

Layer 2: apt-get install → 220MB

Layer 3: npm install → 400MB

Layer 4: npm run build → 50MB

Layer 5: copy binary → 10MB

─────────────────────────────────

Total: → 760MB

rm -rf node_modules in a later RUN doesn't remove layer 3. It adds a new layer that marks those files as deleted. The 400MB is still there.

This is why "clean up in the same RUN instruction" exists as advice. It works. But it's a workaround for a single-stage problem, not a solution to it.
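The additive behaviour can be sketched as a toy model. This is not Docker's implementation, only an illustration using the layer sizes from the table above; the file paths and the `Layer` class are made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Layer:
    name: str
    added: dict = field(default_factory=dict)   # path -> size in MB (hypothetical paths)
    deleted: set = field(default_factory=set)   # paths this layer marks as deleted


def image_size(layers):
    """Size that ships: every layer's additions count, deletions never subtract."""
    return sum(sum(layer.added.values()) for layer in layers)


def visible_size(layers):
    """Size of the merged filesystem the running container actually sees."""
    merged = {}
    for layer in layers:
        for path in layer.deleted:
            merged.pop(path, None)
        merged.update(layer.added)
    return sum(merged.values())


layers = [
    Layer("base OS", {"/os": 80}),
    Layer("apt-get install", {"/usr/toolchain": 220}),
    Layer("npm install", {"/app/node_modules": 400}),
    Layer("npm run build", {"/app/build": 50}),
    Layer("copy binary", {"/app/bin": 10}),
    Layer("rm -rf node_modules", deleted={"/app/node_modules"}),
]

print(image_size(layers))    # 760
print(visible_size(layers))  # 360
```

The container sees 360MB of files, but the image you push and pull is still 760MB.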

What multi-stage builds actually do

A multi-stage build is not a cleanup trick. It is a pipeline with isolated filesystem scopes.

Each FROM starts a new stage with a clean slate. No previous layers carry over unless you explicitly copy artifacts between stages.

# Stage 1: builder

FROM node:20 AS builder

WORKDIR /app

COPY package*.json ./

RUN npm ci

COPY . .

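RUN npm run build

# Strip devDependencies so that only production modules get copied into the runtime stage

RUN npm prune --omit=dev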

# Stage 2: runtime

FROM node:20-alpine AS runtime

WORKDIR /app

COPY --from=builder /app/dist ./dist

COPY --from=builder /app/node_modules ./node_modules

CMD ["node", "dist/index.js"]

The runtime stage starts from a clean alpine image. It has no knowledge of the builder stage's layers. It only receives what you explicitly COPY --from=builder.

The result:

builder: node:20 + devDeps + source + compiler cache → ~1.1GB

runtime: node:20-alpine + dist/ + prod modules only → ~120MB

The builder is a throwaway workspace. It exists to produce artifacts. The runtime stage is what actually ships.

How COPY --from works internally

COPY --from=builder is not a layer merge. It is a selective file copy between two independent layer graphs.

When Docker processes it:

- Resolves the named stage to its final filesystem state

- Reads only the specified path from that filesystem

- Writes those bytes as a new layer in the current stage

The source stage's layers are not included or referenced. Just the files at that path, at the moment the source stage finished. That's how you copy a 5MB Go binary out of a 1.2GB builder and arrive at a 50MB final image.
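Those three steps can be sketched as a small model. It is a simplification, not how BuildKit is written; the layer breakdown of the 1.2GB builder and the 45MB runtime base are assumed numbers chosen to match the totals above.

```python
def final_filesystem(layers):
    """Merge (added, deleted) layers in order into one path -> size-in-MB view."""
    fs = {}
    for added, deleted in layers:
        for path in deleted:
            fs.pop(path, None)
        fs.update(added)
    return fs


def copy_from(source_layers, src, dest):
    """Return one new layer holding only the files at or under `src`, re-rooted at `dest`."""
    fs = final_filesystem(source_layers)
    return {
        dest + path[len(src):]: size
        for path, size in fs.items()
        if path == src or path.startswith(src.rstrip("/") + "/")
    }


# Assumed breakdown of a ~1.2GB Go builder stage (illustrative numbers, in MB).
builder = [
    ({"/usr/local/go": 800}, set()),
    ({"/go/pkg/mod": 395}, set()),
    ({"/app/server": 5}, set()),
]
# Assumed 45MB runtime base image.
runtime = [({"/base": 45}, set())]

# COPY --from=builder /app/server /server
runtime.append((copy_from(builder, "/app/server", "/server"), set()))

print(final_filesystem(runtime))  # {'/base': 45, '/server': 5}
print(sum(size for added, _ in runtime for size in added.values()))  # 50
```

Nothing from the builder's 1,195MB of toolchain and module cache reaches the runtime stage, because only the requested path is read.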

Layer cache: where it breaks

Cache and parallelism are the engine. Understanding cache invalidation is what makes the engine fast.

Docker reuses a cached layer only if the instruction is identical, the files it references haven't changed, and every layer before it was also a cache hit. One miss invalidates everything after it.

Wrong order:

# cache miss on every commit

COPY . .

# forced reinstall every time

RUN npm ci

Right order:

# only changes when deps change

COPY package*.json ./

# cache hit on every code-only commit

RUN npm ci

COPY . .

RUN npm run build

package.json changes once a week. Source files change on every commit.

A CI pipeline reinstalling 400MB of npm packages on every push is not a performance problem. It is a layer ordering problem.
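The "one miss invalidates everything after it" rule is easy to model. The sketch below is a simplification of the real cache key (it only tracks which files each instruction reads), and the file names are hypothetical.

```python
def rebuilt_steps(steps, changed_files):
    """Return the instructions that must re-run.

    steps: list of (instruction, set of files the instruction reads).
    Once one step misses the cache, every later step misses too.
    """
    rebuilt = []
    invalidated = False
    for instruction, inputs in steps:
        if not invalidated and inputs & changed_files:
            invalidated = True
        if invalidated:
            rebuilt.append(instruction)
    return rebuilt


all_files = {"package.json", "package-lock.json", "src/index.js"}
manifests = {"package.json", "package-lock.json"}

wrong_order = [
    ("COPY . .", all_files),
    ("RUN npm ci", set()),
    ("RUN npm run build", set()),
]
right_order = [
    ("COPY package*.json ./", manifests),
    ("RUN npm ci", set()),
    ("COPY . .", all_files),
    ("RUN npm run build", set()),
]

code_only_commit = {"src/index.js"}

print(rebuilt_steps(wrong_order, code_only_commit))
# ['COPY . .', 'RUN npm ci', 'RUN npm run build']
print(rebuilt_steps(right_order, code_only_commit))
# ['COPY . .', 'RUN npm run build']
```

With the right order, a code-only commit never reaches `npm ci`. A dependency change still rebuilds everything, which is what you want.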

BuildKit runs independent stages in parallel

If two stages don't depend on each other, BuildKit executes them simultaneously by resolving a dependency DAG. Your total build time approaches the slowest stage, not the sum of all stages.

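FROM golang:1.22 AS go-builder

WORKDIR /src

# Assumes the Go module sits at the root of the build context

COPY . .

RUN go build -o /app/server .

FROM node:20 AS frontend-builder

WORKDIR /app

# Assumes package.json and the frontend source sit at the root of the build context

COPY . .

RUN npm ci && npm run build

FROM debian:bookworm-slim AS runtime

COPY --from=go-builder /app/server /usr/local/bin/

COPY --from=frontend-builder /app/dist /var/www/

go-builder and frontend-builder are independent. BuildKit runs both at the same time. With three independent two-minute compile stages, a sequential build costs six minutes and a parallel one costs about two.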
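The six-versus-two arithmetic is a critical-path calculation over the stage graph. Here is a minimal sketch, assuming unlimited parallel workers and a runtime stage whose copy step takes negligible time; `docs-builder` is a hypothetical third stage added to match the three-stage figure.

```python
from functools import lru_cache

# stage name -> (minutes of work, stages it depends on)
stages = {
    "go-builder": (2, []),
    "frontend-builder": (2, []),
    "docs-builder": (2, []),  # hypothetical third independent stage
    "runtime": (0, ["go-builder", "frontend-builder", "docs-builder"]),
}


def sequential_minutes(stages):
    """One stage after another: total time is the sum of all stages."""
    return sum(minutes for minutes, _ in stages.values())


def parallel_minutes(stages):
    """Independent stages overlap: total time is the longest dependency chain."""

    @lru_cache(maxsize=None)
    def finish(name):
        minutes, deps = stages[name]
        return minutes + max((finish(dep) for dep in deps), default=0)

    return max(finish(name) for name in stages)


print(sequential_minutes(stages))  # 6
print(parallel_minutes(stages))    # 2
```

If one stage depended on another, its time would stack on top of its dependency's, and the parallel figure would grow accordingly.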

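BuildKit is the default builder on Docker Desktop and on Docker Engine 23.0 and later. On older versions, enable it explicitly:

export DOCKER_BUILDKIT=1

docker build .

Three patterns that show up in production

These are the three shapes that the cache-and-parallelism engine powers in production.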

Pattern 1: Compiler out, binary in

Your build tool produces one artifact. Production doesn't need the compiler.

FROM scratch is the logical endpoint of this pattern. Zero bytes. No OS. Just your binary.

FROM golang:1.22-alpine AS builder

WORKDIR /app

COPY go.mod go.sum ./

RUN go mod download

COPY . .

RUN CGO_ENABLED=0 go build -o server .

FROM scratch AS runtime

COPY --from=builder /app/server /server

ENTRYPOINT ["/server"]

CGO_ENABLED=0 produces a statically compiled binary with no OS library dependencies. Final image is literally the size of the binary. For a production Go service, this isn't clever, it's standard.

Pattern 2: Dev, test, and prod from one Dockerfile

Most teams maintain three separate Dockerfiles. One multi-stage file handles all three with --target.

FROM node:20 AS base

WORKDIR /app

COPY package*.json ./

RUN npm ci

FROM base AS development

COPY . .

CMD ["npm", "run", "dev"]

FROM base AS test

COPY . .

RUN npm test

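FROM base AS production

COPY . .

RUN npm run build

# The build usually needs devDependencies, so drop them only after it has run

RUN npm prune --omit=dev

CMD ["node", "dist/index.js"]

docker build --target test .

docker build --target production .

One Dockerfile. Three environments. No duplication. No drift between what you test and what you ship.

One caveat: production still inherits the layers of base, including its full npm ci, so pruning tidies the filesystem without shrinking the image. For the smallest image, copy dist and the pruned node_modules into a fresh stage, as in the builder and runtime example earlier.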
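It helps to know which stages a given --target actually executes. With BuildKit, only the target and the stages it depends on are built. A minimal sketch of that selection, using the stage names from the Dockerfile above (the `parents` mapping is written by hand here, not parsed from a Dockerfile):

```python
def stages_to_build(parents, target):
    """Return the target and the stages it depends on, dependencies first."""
    ordered = []

    def visit(stage):
        if stage in ordered:
            return
        for parent in parents.get(stage, []):
            visit(parent)
        ordered.append(stage)

    visit(target)
    return ordered


# Each stage lists the stages it builds FROM or copies from.
parents = {
    "base": [],
    "development": ["base"],
    "test": ["base"],
    "production": ["base"],
}

print(stages_to_build(parents, "test"))        # ['base', 'test']
print(stages_to_build(parents, "production"))  # ['base', 'production']
```

One consequence: building the production target does not run npm test, because production does not depend on the test stage. Your pipeline has to build the test target as its own step.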

Pattern 3: Secrets that must not survive the build

Some builds need credentials: a private registry token, an SSH key, a pip index URL. Here's the risk before showing you the broken code:

Anyone with image pull access can extract credentials baked into a layer, even if you deleted them afterward. The deletion is a new layer. The original write is permanent.

The broken approach:

ARG NPM_TOKEN

RUN echo "//registry.npmjs.org/:_authToken=${NPM_TOKEN}" > ~/.npmrc

RUN npm ci

# deleting .npmrc here does nothing - the token is already in a layer above

The right approach with BuildKit secrets:

RUN --mount=type=secret,id=npmrc,target=/root/.npmrc npm ci

docker build --secret id=npmrc,src=.npmrc .

The secret is mounted at build time only. Never written to a layer. Doesn't appear in docker history. Doesn't exist in the final image.
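To check whether an image you already built leaks a token, one option is to scan every layer of a docker save archive. The sketch below assumes an archive created with docker save your-image:latest -o image.tar. The exact layout of that archive varies between Docker versions, so the hypothetical helper `find_in_layers` simply tries to open every member as a tar layer and skips the ones that are not.

```python
import tarfile


def find_in_layers(image_tar_path, needle):
    """Return (layer_name, file_path) pairs whose file content contains `needle` (bytes)."""
    hits = []
    with tarfile.open(image_tar_path) as image:
        for member in image.getmembers():
            if not member.isfile():
                continue
            blob = image.extractfile(member)
            try:
                # "r:*" also accepts gzip-compressed layer blobs
                layer = tarfile.open(fileobj=blob, mode="r:*")
            except tarfile.ReadError:
                continue  # JSON manifests and configs are not layer archives
            with layer:
                for entry in layer:
                    if not entry.isfile():
                        continue
                    # Reads each file fully into memory: fine for a spot check,
                    # slow for very large images
                    if needle in layer.extractfile(entry).read():
                        hits.append((member.name, entry.name))
    return hits
```

Calling find_in_layers("image.tar", b"_authToken") on an image built with the broken approach should report the .npmrc file in the layer that wrote it, even if a later layer deleted it.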

The mental model to keep

Think of your Dockerfile as a factory floor.

The builder stage is the workshop. Every tool, every mess, every intermediate scrap lives here. The mess is supposed to stay in the workshop.

The runtime stage is the shipping container. Only the finished product goes in. No tools, no scrap, no instruction manuals for machines the customer will never see.

Every layer in your runtime image that doesn't serve production traffic is waste: wasted pull time, wasted storage, wasted attack surface.

Run docker history --no-trunc your-image:latest right now.

If you see compiler layers, test dependencies, or dev tooling in your runtime image, you don't have an optimization problem. You have a single-stage Dockerfile problem.

And now you know exactly how to fix it.


Thank you for supporting this newsletter.

Y’all are the best.