The Uptime Engineer

👋 Hi, I am Yoshik

You'll learn:

- Why durability lives or dies on repair speed, not replication count

- How S3 catches corruption before it's even stored

- How to protect data from your own team deleting the wrong thing

🔥 Tool Spotlight

S3 Batch Operations: Compute Checksum. Verify integrity across billions of objects without downloading them. Generates a full audit report. Good for fixity checks, compliance, and "is this archive silently rotting?"

You've probably heard someone say "S3 has 11 nines, it's basically indestructible."

They're not wrong. But they're quoting the wrong number.

11 nines is durability: the probability that an object you store is still intact a year from now. It has nothing to do with whether S3 is reachable. That's availability: a different promise, backed by different mechanisms.

S3 Standard is designed for 99.999999999% durability and 99.99% availability. Two promises. Two failure modes. Two entirely different engineering responses when something goes wrong.
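To make the two numbers concrete, here is a back-of-the-envelope sketch. It assumes the durability figure is an annual per-object loss probability, that losses are independent, and that a year has 365 days. The function names are illustrative, not anything AWS publishes.

```python
from fractions import Fraction

MINUTES_PER_YEAR = 365 * 24 * 60  # ignores leap years


def failure_fraction(nines: int) -> Fraction:
    """Turn 'N nines' into the failing fraction, e.g. 4 nines -> 1/10,000."""
    return Fraction(1, 10 ** nines)


def years_per_lost_object(object_count: int, durability_nines: int) -> Fraction:
    """Average years between single-object losses at the stated durability."""
    expected_losses_per_year = object_count * failure_fraction(durability_nines)
    return 1 / expected_losses_per_year


def downtime_minutes_per_year(availability_nines: int) -> float:
    """Minutes per year the service may be unreachable at the stated availability."""
    return float(MINUTES_PER_YEAR * failure_fraction(availability_nines))


print(years_per_lost_object(10_000_000, 11))  # durability: 11 nines
print(downtime_minutes_per_year(4))           # availability: 99.99%
```

Under these assumptions, 10 million objects lose roughly one object every 10,000 years, while 99.99% availability still allows about 52.6 minutes of unreachability per year. Same service, very different numbers.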

Get these confused in a postmortem and you will spend four hours solving the wrong problem.

Durability has five failure gates.

1. A single disk fails. Your only copy disappears.

2. Redundancy drops. Nobody notices.

3. Repair is too slow. A second failure hits before the first is fixed.

4. Bits flip silently on a live disk. Data exists but is wrong.

5. A human or an app deletes or overwrites the right object at exactly the wrong time.

S3's architecture is a direct response to each of these. One mechanism per gate.

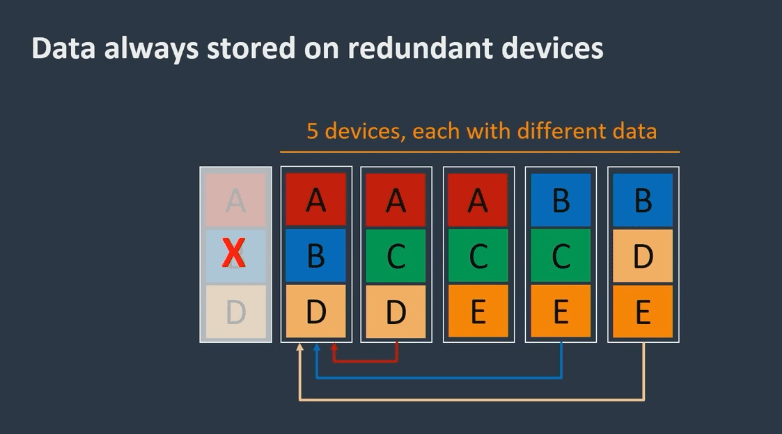

Gate 1: spread copies, not just disks.

S3 Standard stores every object across multiple devices in at least three Availability Zones.

Not three racks. Not three servers. Three physically separated facilities with independent power, cooling, and networking.

Replication within a single AZ is not redundancy. It's a false sense of safety. If your three copies share a power circuit or a network uplink, they can fail together. The unit of redundancy must be the unit of failure.

Disk fails: still safe. Rack fails: still safe. Full AZ goes dark: still safe.
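If you place replicas yourself, "the unit of redundancy must be the unit of failure" is something you can check mechanically. A minimal sketch, assuming each replica is tagged with its zone, power circuit, and network uplink. The `Replica` fields and the sample values are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Replica:
    zone: str
    power_circuit: str
    network_uplink: str


def shared_failure_domains(replicas: list[Replica]) -> dict[str, list[str]]:
    """Return, per domain type, the domain values that host more than one replica."""
    shared = {}
    for domain in ("zone", "power_circuit", "network_uplink"):
        counts = Counter(getattr(r, domain) for r in replicas)
        duplicates = sorted(value for value, n in counts.items() if n > 1)
        if duplicates:
            shared[domain] = duplicates
    return shared


# Three copies in one zone: they look redundant but can fail together.
same_zone = [
    Replica("az-1", "pdu-a", "uplink-1"),
    Replica("az-1", "pdu-a", "uplink-2"),
    Replica("az-1", "pdu-b", "uplink-2"),
]
# Three copies with nothing in common.
spread = [
    Replica("az-1", "pdu-a", "uplink-1"),
    Replica("az-2", "pdu-b", "uplink-2"),
    Replica("az-3", "pdu-c", "uplink-3"),
]

print(shared_failure_domains(same_zone))
print(shared_failure_domains(spread))
```

An empty result means no two replicas share a domain you modeled. It says nothing about domains you forgot to model.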

Gates 2 and 3: replication is a state. Repair is the operation.

This is the part most storage conversations miss entirely.

Replication is not a permanent condition. It's a snapshot of redundancy that hardware failures continuously erode. Every time a disk dies, you are temporarily under-replicated. If further failures take out the remaining copies while you're in that window, you lose data.

S3 is explicitly designed to detect and repair lost redundancy quickly. That word "quickly" is doing real work in AWS's documentation.

The practical metric this implies: repair throughput versus failure rate. If drives are dying faster than your repair pipeline can rebuild copies, durability degrades regardless of your starting replication factor.
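Here is a rough way to see why the repair window matters. The sketch assumes drives fail independently at a constant rate derived from an annual failure rate (AFR), which real fleets only approximate. The 2% AFR is an illustrative number, not an S3 figure.

```python
import math

HOURS_PER_YEAR = 24 * 365


def p_failure_during_repair(annual_failure_rate: float,
                            remaining_replicas: int,
                            repair_hours: float) -> float:
    """Probability that at least one surviving replica's drive fails before repair completes."""
    # Convert the annual failure probability into a constant hourly hazard rate.
    hourly_rate = -math.log(1.0 - annual_failure_rate) / HOURS_PER_YEAR
    return 1.0 - math.exp(-hourly_rate * remaining_replicas * repair_hours)


# Same hardware, same replication factor, different repair speed.
for hours in (1, 24, 24 * 7):
    risk = p_failure_during_repair(0.02, remaining_replicas=2, repair_hours=hours)
    print(f"repair takes {hours:>3} h -> risk of another failure {risk:.6f}")
```

The replication factor never changes in that loop. Only the repair time does, and the risk scales with it.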

If you run Ceph, MinIO, or any replicated storage, track under-replicated object counts and repair queue depth. That's your actual durability signal. Cluster health being green tells you almost nothing.
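One way to turn those two numbers into a signal: sample the under-replicated count over time and ask whether the backlog is actually draining. A minimal sketch, assuming you already export that count from your storage system. How you collect the samples is up to you, and `repair_eta_seconds` is a hypothetical helper, not a Ceph or MinIO API.

```python
from typing import Optional, Sequence, Tuple

Sample = Tuple[float, int]  # (unix_seconds, under_replicated_object_count)


def repair_eta_seconds(samples: Sequence[Sample]) -> Optional[float]:
    """Estimate seconds until the backlog reaches zero, or None if it is not shrinking."""
    if len(samples) < 2:
        return None
    (t0, c0), (t1, c1) = samples[0], samples[-1]
    if t1 <= t0:
        return None
    if c1 == 0:
        return 0.0
    # Net drain rate: objects repaired per second minus objects newly degraded.
    drain_rate = (c0 - c1) / (t1 - t0)
    if drain_rate <= 0:
        return None  # flat or growing backlog: failures are outpacing repair
    return c1 / drain_rate


print(repair_eta_seconds([(0, 1000), (600, 400)]))  # draining
print(repair_eta_seconds([(0, 100), (600, 150)]))   # growing
```

A `None` here is the alert worth paging on: the cluster may still report healthy while redundancy is being lost faster than it is rebuilt.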

Gate 4: data can be wrong without being missing.

All your replicas exist. The bytes are there. But somewhere between write and read, bits flipped.

This is silent corruption. Sometimes it's bit rot: sectors go bad on a technically healthy disk. Sometimes network hardware corrupts a payload in transit. Either way, the object appears to exist. It just returns garbage.

S3 runs regular integrity scans using checksums against stored objects to catch exactly this. A missing object is loud and obvious. Corrupted data is silent until a customer hits it.

Checksums convert silent corruption into a detectable event.
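The same idea works on any store you run yourself. This is a toy sketch of a scrub pass, not how S3 implements its scans: it assumes you recorded a SHA-256 digest when each object was written, and the in-memory dicts stand in for real storage.

```python
import hashlib


def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def scrub(store: dict[str, bytes], recorded: dict[str, str]) -> list[str]:
    """Return keys whose current bytes no longer match the digest recorded at write time."""
    return sorted(key for key, data in store.items() if digest(data) != recorded.get(key))


store = {"a.txt": b"hello", "b.txt": b"world"}
recorded = {key: digest(data) for key, data in store.items()}

# Simulate bit rot: flip one bit in b.txt without touching the recorded digest.
rotted = bytearray(store["b.txt"])
rotted[0] ^= 0x01
store["b.txt"] = bytes(rotted)

print(scrub(store, recorded))
```

The object count and sizes are unchanged after the flip. Only the digest comparison notices.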

Gate 4 continued: now the integrity check starts at upload.

For years, integrity verification happened after data arrived at S3. That still left a window: faulty network hardware between your client and AWS, or a bug in your SDK, could corrupt a payload that S3 never knew was wrong.

AWS closed this gap with default end-to-end integrity protections for new objects, enabled automatically with updated SDKs and CLI.

The model is simple:

client computes checksum → sends it with the payload

S3 independently computes checksum → compares both

mismatch: upload rejected, no "success" response returned

This is the correct contract for any system that accepts critical data. Don't acknowledge success until you've validated what you received.

If you run your own ingestion pipelines, copy this pattern verbatim. Compute a CRC32C or SHA-256 at the client. Verify at the server boundary. Reject on mismatch. Never trust the network to be clean.
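A minimal sketch of that contract, using SHA-256 from the standard library (CRC32C needs a third-party package in Python). The function names, the `ChecksumMismatch` exception, and the dict used as storage are all hypothetical stand-ins for your own client and server code.

```python
import hashlib


class ChecksumMismatch(Exception):
    """Raised when the received payload does not match the client's checksum."""


def client_prepare(payload: bytes) -> tuple[bytes, str]:
    """Client side: compute the checksum before the payload touches the network."""
    return payload, hashlib.sha256(payload).hexdigest()


def server_accept(payload: bytes, claimed_sha256: str,
                  storage: dict[str, bytes], key: str) -> str:
    """Server side: recompute, compare, and only then store and acknowledge."""
    actual = hashlib.sha256(payload).hexdigest()
    if actual != claimed_sha256:
        raise ChecksumMismatch(f"{key}: expected {claimed_sha256}, got {actual}")
    storage[key] = payload
    return actual


storage: dict[str, bytes] = {}
payload, checksum = client_prepare(b"critical record")

server_accept(payload, checksum, storage, "records/1")  # clean transfer: stored

try:
    # Same checksum, but one byte was damaged in transit.
    server_accept(b"critical recorc", checksum, storage, "records/2")
except ChecksumMismatch as exc:
    print("rejected:", exc)
```

The key design choice is ordering: nothing is written and no success is returned until the comparison passes.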

Gate 5: the blast radius you don't model.

Disks fail at predictable rates. Humans are harder to model.

The most common data-loss event in real organizations is not hardware. It's an accidental delete, a bad migration script, an app bug that overwrites records at scale.

S3 handles this with two mechanisms:

Versioning preserves every version of every object. Delete an object with versioning enabled and S3 creates a delete marker instead of removing the data. The previous version still exists and is recoverable. The exception: a delete that names a specific version ID is permanent.

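Object Lock goes further: it prevents deletion or overwriting of an object version for a specified retention period. In compliance mode that holds regardless of who asks, including root credentials. In governance mode, users with a specific bypass permission can still override it.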
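The semantics are easier to see in a toy model. This is an in-memory illustration of delete markers and retention, not S3's implementation. `VersionedStore`, its methods, and the use of list positions as version ids are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Version:
    data: Optional[bytes]      # None means this entry is a delete marker
    retain_until: float = 0.0  # hypothetical lock expiry, as a timestamp


@dataclass
class VersionedStore:
    versions: dict[str, list[Version]] = field(default_factory=dict)

    def put(self, key: str, data: bytes, retain_until: float = 0.0) -> int:
        self.versions.setdefault(key, []).append(Version(data, retain_until))
        return len(self.versions[key]) - 1  # list position doubles as version id

    def delete(self, key: str) -> None:
        """Plain delete: append a delete marker, keep every earlier version."""
        self.versions.setdefault(key, []).append(Version(None))

    def get(self, key: str) -> Optional[bytes]:
        history = self.versions.get(key, [])
        return history[-1].data if history else None

    def purge(self, key: str, version_id: int, now: float) -> None:
        """Permanently remove one version; refused while its retention is active."""
        history = self.versions[key]
        if now < history[version_id].retain_until:
            raise PermissionError("version is locked until its retention date")
        del history[version_id]  # later ids shift down in this toy model


store = VersionedStore()
store.put("report.csv", b"v1", retain_until=100.0)
store.delete("report.csv")
print(store.get("report.csv"))          # latest entry is a delete marker

store.purge("report.csv", 1, now=50.0)  # removing the marker restores v1
print(store.get("report.csv"))

try:
    store.purge("report.csv", 0, now=50.0)
except PermissionError as exc:
    print("refused:", exc)
```

The accidental delete was undone by removing the marker, and the locked version could not be purged before its retention date.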

If your current disaster recovery plan depends on "be careful when running delete commands," you are one bad day away from a very expensive lesson.
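Turning both guardrails on is a couple of API calls. A hedged sketch using boto3's S3 client methods `put_bucket_versioning` and `put_object_lock_configuration`: the wrapper function, bucket name, and retention period are placeholders, and you should confirm the bucket's Object Lock prerequisites in the AWS documentation before relying on this.

```python
def enable_guardrails(s3_client, bucket: str, retention_days: int,
                      mode: str = "GOVERNANCE") -> None:
    """Turn on versioning, then set a default Object Lock retention for new objects."""
    if mode not in ("GOVERNANCE", "COMPLIANCE"):
        raise ValueError("mode must be GOVERNANCE or COMPLIANCE")
    # Object Lock only works on versioned buckets, so versioning goes first.
    s3_client.put_bucket_versioning(
        Bucket=bucket,
        VersioningConfiguration={"Status": "Enabled"},
    )
    s3_client.put_object_lock_configuration(
        Bucket=bucket,
        ObjectLockConfiguration={
            "ObjectLockEnabled": "Enabled",
            "Rule": {"DefaultRetention": {"Mode": mode, "Days": retention_days}},
        },
    )


# Usage, assuming boto3 is installed and credentials are configured:
#   import boto3
#   enable_guardrails(boto3.client("s3"), "my-example-bucket", retention_days=30)
```

The default here is governance mode on purpose. Compliance-mode retention cannot be shortened or removed once set, so rehearse with governance before you commit to it.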

The practical checklist.

If you want S3-level durability thinking applied to your own stack, your system needs:

- Failure domain isolation: replicas across independent power, network, and physical location

- Repair throughput monitoring: track how fast you recover lost redundancy, not just whether replication is configured

- Checksums at the boundary: compute and verify at both ends of every write path, at rest and in transit

- Human error guardrails: versioning semantics, retention policies, and a tested restore path

- A written durability contract: what you guarantee (and what you don't) so your team speaks the same language in an incident

S3's 11 nines is not a hardware miracle. It is what happens when you treat under-replication, corruption, and human mistakes as routine events and build detection and repair loops around all three.

The real question isn't "how durable is our storage?"

It's "how fast do we detect and recover when durability breaks?"

Thanks for reading. You are the best.
