The Uptime Engineer

👋 Hi, I am Yoshik

This week, you'll learn why naive hashing remaps every key when you add a node, how the fixed hash ring keeps most keys stable during changes, and why virtual nodes are non-negotiable for even distribution at scale.

🔥Tool Spotlight

HashiCorp Nomad - production scheduler that uses consistent hashing natively for job placement across heterogeneous node pools, with built-in ring visualization and rebalance commands.

📚 Worth Your Time

#14 Uptime Sync: GitOps, AI Agents, and Kubernetes at Scale

Anthropic's secret project, the npm supply chain attack you should've caught, and the Kubernetes cost cut hiding in your instance type

Most engineers know the term consistent hashing. They have seen it in system design interviews, in Cassandra docs, in Redis Cluster architecture diagrams. They nod when it comes up. But ask them why it was invented - not what it does, but what specific problem forced someone to sit down and design it - and most go quiet.

That gap is what this we will close today.

1. The Problem Before the Solution

1.1 What happens when you add or remove a server

1.2 Why naive hashing breaks everything

- The modulo approach and why it fails at scale

- What a cache stampede looks like in production

1.3 The cost of remapping - real numbers

2. What Consistent Hashing Actually Is

2.1 The hash ring - what it is and how to visualise it

2.2 How nodes sit on the ring

2.3 How a key finds its node

2.4 What changes when a node is added

2.5 What changes when a node is removed

2.6 Why only a fraction of keys move - the math in plain terms

3. Virtual Nodes

3.1 The problem with a basic ring - uneven distribution

3.2 What virtual nodes are

3.3 How vnodes fix the distribution problem

3.4 The trade-off - more complexity, better balance

3.5 How many vnodes is enough - what production systems use

4. Replication on the Ring

4.1 Why one copy of data is not enough

4.2 How replication works with consistent hashing

4.3 Replication factor - what it means and how to pick it

4.4 What happens during a node failure with replication

5. The Trade-offs You Need to Know

5.1 Consistency vs availability on the ring

5.2 Hotspots - when the ring is not as balanced as you think

5.3 Operational complexity at scale

5.4 When consistent hashing is overkillThe Problem Before the Solution

Naive Hashing

Before consistent hashing existed, the obvious way to distribute data across multiple servers was simple modulo hashing. You take a key - say a user ID or a cache key - run it through a hash function, and do hash(key) % N where N is the number of servers. The result tells you which server holds that key. Server 0, server 1, server 2. Clean, simple, predictable.

This works perfectly until N changes.

Say you have 4 servers and your hash function sends key "user_4291" to server 2. Everything is running fine. Then traffic doubles and your team adds a fifth server. N is now 5. Run the same key through the formula and hash("user_4291") % 5 gives you a completely different server. Not just for this one key - for the majority of your keys. When N changes from 4 to 5, roughly 80% of all keys map to a different server than they did before.

In a database, that means data is on the wrong node and queries fail or return nothing until the data is moved. In a cache, it is worse. The cache does not know data moved - it just gets a miss. The request falls through to the database. Now every single one of those remapped keys is a cache miss hitting your database simultaneously. This is a cache stampede, and it can take down a production system that was handling load perfectly fine five minutes ago.

The Cost of Remapping

The numbers make this concrete. Imagine you are running Memcached with 10 nodes caching 50 million keys. Your cache hit rate is 95% - your database handles 5% of requests, well within capacity. You add one node to handle growing traffic. With naive hashing, hash(key) % 10 becomes hash(key) % 11. Roughly 9 out of every 10 keys now map to a different node. Your cache hit rate drops from 95% to near zero in an instant. 100% of requests hit the database. Your database, which was comfortably handling 5% of traffic, now gets 100%.

That is not a gradual degradation. That is an immediate outage caused by adding capacity - the exact opposite of what you were trying to do.

Removing a node is the same problem in reverse. A node goes down, N decreases by one, the formula shifts, keys scatter across different nodes, cache miss rate spikes, database gets hammered.

The problem is not the hash function. The problem is that % N ties every key's location to the total number of servers. Change the total, change everything.

What Consistent Hashing Actually Is

The core insight behind consistent hashing is this: stop tying key locations to the total number of servers. Instead, map both keys and servers onto the same fixed coordinate space, and let keys find their server by position rather than by division.

That coordinate space is a ring.

The Hash Ring

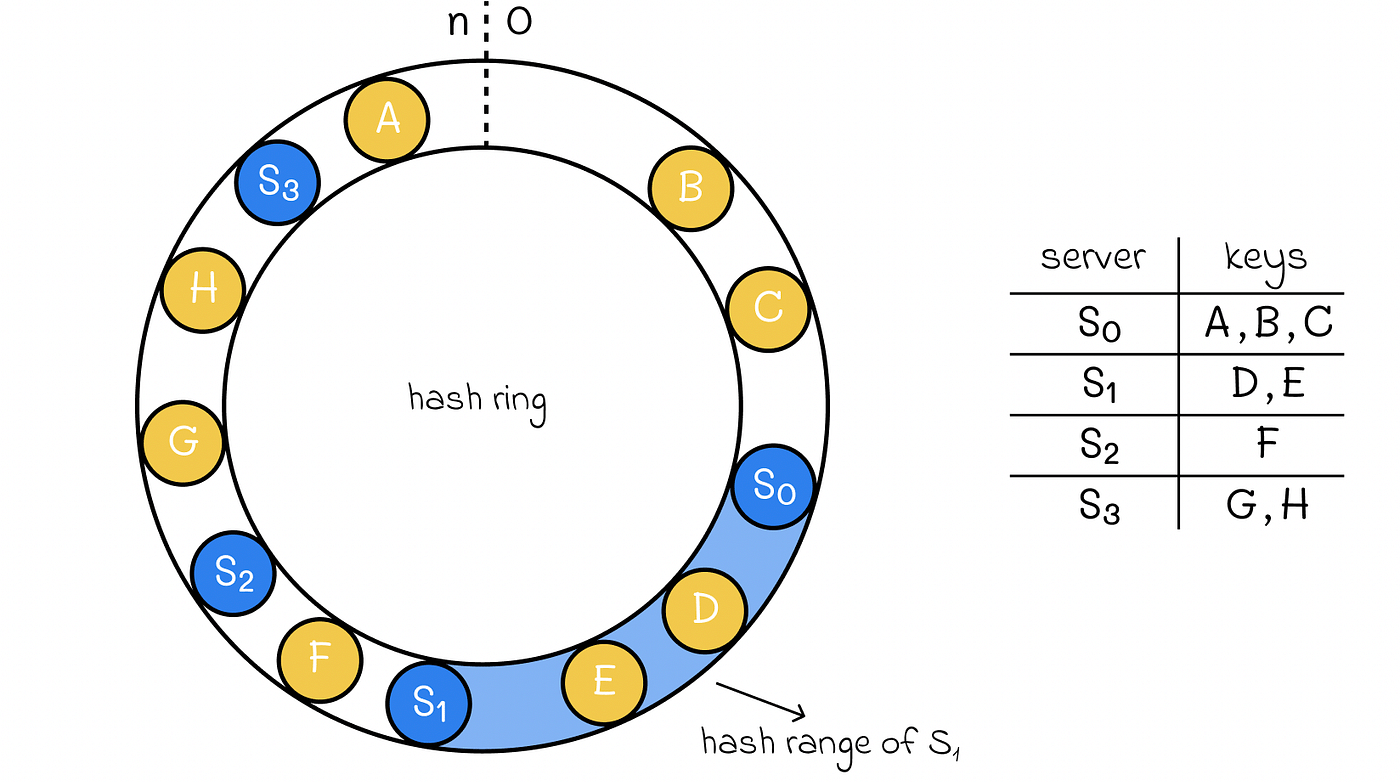

Take a hash function - SHA-1, MurmurHash, it does not matter which for now. That function produces output in a fixed range. For SHA-1 that range is 0 to 2^160 - 1. Imagine bending that range into a circle so that 0 and the maximum value sit next to each other. That circle is the hash ring. Every possible hash value has a position somewhere on this ring.

Nothing about the ring changes when you add or remove servers. The ring is fixed. It is just a coordinate space.

Nodes on the Ring

Each server gets hashed too. You hash something that identifies the server - its IP address, its hostname, its ID - and the result places that server at a position on the ring. Four servers means four positions on the ring. The servers do not sit evenly spaced. They land wherever their hash output places them.

Server A hashes to position 12. Server B to position 37. Server C to position 68. Server D to position 91. Those are their fixed positions on the ring as long as they exist.

How a Key Finds Its Node

When a key comes in, you hash it the same way. The hash gives you a position on the ring. Now walk clockwise from that position until you hit a server. That server owns the key.

Key hashes to position 20. Walk clockwise. First server you hit is Server B at position 37. Server B owns this key.

Key hashes to position 55. Walk clockwise. First server you hit is Server C at position 68. Server C owns this key.

Key hashes to position 95. Walk clockwise. You pass the point where the ring wraps around, and the first server you hit is Server A at position 12. Server A owns this key.

This is the entire lookup mechanism. Hash the key, find its position, walk clockwise, stop at the first server.

Adding a Node

Say you add Server E and it hashes to position 50 on the ring. Server E now sits between Server B at 37 and Server C at 68.

Before Server E existed, every key between position 37 and 68 walked clockwise and landed on Server C. Now keys between position 50 and 68 still land on Server C, but keys between position 37 and 50 now land on Server E instead.

Only the keys that fall in that 37 to 50 range need to move. Everything else stays exactly where it was. Server A, Server B, Server D - none of their keys are affected at all.

Removing a Node

Server C goes down. It sat at position 68. The keys it owned were everything between position 50 and 68 - the range from the previous server to Server C.

With Server C gone, those keys walk clockwise past position 68 and land on Server D at position 91. Only Server C's keys move. Server A, Server B, Server E - untouched.

Why Only a Fraction of Keys Move

With naive hashing and N servers, adding one server reshuffles roughly (N-1)/N of all keys. With 10 servers, adding one moves 90% of keys.

With consistent hashing, adding one server only affects the keys that fall between the new server and its predecessor on the ring. If your servers are reasonably spread across the ring, each server owns roughly 1/N of the key space. Adding one server only touches that 1/N slice - keys that need to move from the server just clockwise of the new arrival.

With 10 servers, adding one moves roughly 1/10 of keys instead of 9/10. With 100 servers, adding one moves roughly 1/100. The more servers you have, the smaller the disruption of adding or removing one.

That is the property that makes consistent hashing useful at scale. Not zero disruption - but disruption proportional to the change, not to the total size of the cluster.

Virtual Nodes

The basic ring works. But it has a problem that only shows up when you think carefully about how servers actually land on the ring.

The Distribution Problem

When you hash server identifiers to place them on the ring, they land wherever the hash function puts them. With a small number of servers, the gaps between them are almost never equal. One server might own 40% of the ring. Another might own 8%. A third might own 25%.

This is a problem because ring ownership directly equals data ownership. The server sitting on 40% of the ring holds 40% of your keys. That server gets 40% of your reads and writes. The server sitting on 8% gets 8%. Your cluster is imbalanced before a single request arrives.

It gets worse when a server goes down. If the server that owned 40% of the ring fails, all of that load shifts to a single neighbour. That neighbour just absorbed a 40% increase in traffic on top of its own existing load. In a system already running near capacity, that kills the next server too. Then its neighbour gets hit. This is how a single node failure cascades into a full cluster outage.

What Virtual Nodes Are

Virtual nodes, usually called vnodes, solve the distribution problem by giving each physical server multiple positions on the ring instead of one.

Instead of hashing Server A's IP once and placing it at position 12, you hash it multiple times using different inputs - "Server-A-1", "Server-A-2", "Server-A-3" and so on - and place each result as a separate point on the ring. Each of these points is a vnode. Each vnode is owned by the same physical server but appears as an independent position on the ring.

With 3 physical servers and 3 vnodes each, you have 9 points on the ring instead of 3. Those 9 points are spread more evenly across the ring, and each physical server owns a collection of small non-contiguous slices rather than one large chunk.

How Vnodes Fix Distribution

With one position per server, Server A owns one continuous arc of the ring. With 10 vnodes, Server A owns 10 small arcs scattered across the ring. The total is roughly the same - about one third of the ring - but instead of one big contiguous block, it is spread out in small pieces interleaved with Server B and Server C's vnodes.

This matters because when Server A goes down, its 10 vnodes are distributed across the ring. Each neighbouring vnode belongs to a different physical server. Server A's load spreads across Server B and Server C roughly equally rather than dumping entirely onto one neighbour.

Adding a server works the same way. Server D joins with 10 vnodes. Each vnode lands in a different part of the ring and takes a small slice from whichever server currently owns that section. The new server pulls evenly from all existing servers rather than stealing a large chunk from one.

The Trade-off

Vnodes improve distribution but they add complexity.

With one position per server, tracking the ring is simple. Four servers, four positions. With vnodes, you are managing hundreds or thousands of positions. The data structure that maps ring positions to physical servers grows significantly. For a cluster of 100 servers with 150 vnodes each, you are tracking 15,000 ring positions.

Membership changes also become more expensive. When a server joins or leaves, you are updating potentially hundreds of positions in the ring metadata rather than one. That metadata needs to be consistent across all nodes in the cluster, which means more coordination overhead.

The other trade-off is that vnodes make replication more complex. With a single position per server, the next N servers clockwise are your replicas. With vnodes, the next N positions might belong to the same physical server, which is useless for replication. Production systems have to skip vnodes that map to servers already holding a replica. That logic adds code and edge cases.

How Many Vnodes Is Enough

The answer depends on cluster size and how much you care about balance versus overhead.

Cassandra uses 256 vnodes per node by default in older versions. More recent versions dropped to 16 because the overhead of 256 vnodes per node in large clusters was causing gossip protocol and repair operation problems that outweighed the distribution benefits.

The general rule: small clusters with fewer than 10 nodes benefit most from more vnodes because you have fewer natural positions on the ring and distribution variance is higher. Large clusters with hundreds of nodes need fewer vnodes per server because the sheer number of servers already provides reasonable distribution.

Most production systems land between 10 and 150 vnodes per physical server. Below 10 and distribution starts getting uneven. Above 150 and the coordination overhead starts showing up in cluster operations.

Replication on the Ring

A single copy of data is a liability. Hardware fails. Disks die. Networks partition. If the only copy of a key lives on one server and that server goes down, that data is gone or unavailable until the server comes back. In a distributed system handling production traffic, that is not acceptable.

Consistent hashing has a natural way to handle replication that fits directly into the ring model.

How Replication Works

When a key lands on its home server - called the coordinator or primary - the system does not stop there. It continues walking clockwise around the ring and writes the same key to the next N-1 servers it encounters. N is your replication factor. The total number of copies of that key across the cluster equals N.

Look at the ring above. S0 owns keys A, B, and C. S1 owns keys D and E. S2 owns key F. S3 owns keys G and H. Each key hashes to a position on the ring and walks clockwise until it hits a server. That server is the primary.

With a replication factor of 3, take key D. It lands on S1 as its primary. The system then walks clockwise from S1 and writes the same key to S2 and S3. Three servers now hold key D. S1, S2, and S3 are the replica set for key D.

The replica set is not chosen manually or configured per key. It falls out automatically from the ring. Wherever a key lands, the next N-1 servers clockwise are always its replicas. This means any node in the cluster can calculate where any key's replicas are just by walking the ring. No central registry needed, no lookup table, no coordinator that needs to be consulted.

Replication Factor

The replication factor is how many copies of each piece of data exist across the cluster. Replication factor 1 means one copy - no redundancy, any node failure loses data. Replication factor 2 means two copies - survive one failure. Replication factor 3 means three copies - survive two simultaneous failures.

Most production systems use a replication factor of 3. With replication factor 2, losing one node leaves you with one copy. If that node also has a problem before the first is restored, you lose data. Replication factor 3 gives you a buffer - you can lose one node entirely and still have two copies while you repair or replace it.

Going above 3 is rarely worth the storage cost for most use cases. The probability of three simultaneous independent failures is low enough that replication factor 3 covers nearly all realistic failure scenarios. Systems handling financial data or medical records sometimes use 5, but that is the exception.

Replication factor also interacts with cluster size. In the diagram above we have 4 servers - S0, S1, S2, S3. A replication factor of 3 means every key is held by 3 out of 4 servers. That still gives you data distribution - S0 does not hold every key - but the redundancy is high. To meaningfully distribute data while maintaining 3 replicas, you need at least 4 nodes. Most recommendations say minimum cluster size should be replication factor plus one.

Node Failure With Replication

S2 goes down. Looking at the ring, S2 sat between S1 and S3 and owned key F as its primary. S2 was also a downstream replica for keys D and E owned by S1.

For key F where S2 was the primary, reads and writes now walk clockwise past S2's position and land on S3, which already holds a replica. No data is lost. No remapping needed. The ring naturally routes requests to the next available copy.

For keys D and E where S2 was a replica - not the primary - S1 still handles reads and writes normally. The cluster is now under-replicated for those keys. It has 2 copies instead of 3. Most systems track this and flag it. When S2 comes back or is replaced, the cluster repairs itself by copying the missing replicas to restore the replication factor.

This self-healing behaviour is one of the reasons consistent hashing pairs well with replication. The ring gives you a deterministic way to find replicas without central coordination. When a node fails, every other node already knows where the replicas are and can take over immediately.

Hinted Handoff

When a replica node is temporarily down and a write comes in for a key it should hold, the coordinator does not just drop the write or wait indefinitely. It stores the write locally with a hint - a note saying "this write belongs on S2, deliver it when S2 comes back." When S2 recovers and rejoins the cluster, the coordinator sends it all the hinted writes it missed during the outage. S2 catches up and the cluster returns to full replication.

This is called hinted handoff. Cassandra uses it. DynamoDB uses a version of it. It is the mechanism that lets these systems stay available for writes even when a replica is temporarily unreachable. The trade-off is that if the coordinator itself fails before delivering the hints, those writes are lost. Hinted handoff is a best-effort durability mechanism, not a guarantee.

The Trade-offs You Need to Know

Consistent hashing solves the remapping problem cleanly. But every design decision has a cost. These are the ones that show up in production and in interviews.

Consistency vs Availability

When you replicate a key across N servers, you immediately face a question: how many of those servers need to acknowledge a write before you tell the client it succeeded? How many need to respond to a read before you trust the answer?

This is where the concepts of write quorum and read quorum come in. If your replication factor is 3, you have three copies of every key. A write quorum of 2 means 2 out of 3 servers must confirm the write before it is considered successful. A read quorum of 2 means 2 out of 3 servers must agree on the value before it is returned to the client.

The rule that makes this useful is W + R > N, where W is write quorum, R is read quorum, and N is replication factor. With N=3, W=2, R=2, you get 2+2=4 which is greater than 3. This guarantees that the set of servers you write to and the set you read from always overlap by at least one server. That overlapping server always has the latest write, so your read always returns the most recent value. This is called strong consistency.

The cost is availability and latency. If you require 2 out of 3 servers to acknowledge every write, and one server is slow or unreachable, every write waits. You can trade consistency for availability by lowering the quorum - W=1, R=1 - but now you can read stale data because your read might hit a replica that has not yet received the latest write.

There is no setting that gives you both strong consistency and full availability during a network partition. That is the CAP theorem, and consistent hashing does not change it. What consistent hashing gives you is a clean way to implement whatever consistency model you choose.

Hotspots

The ring distributes keys based on their hash values. A good hash function spreads keys uniformly across the ring. In theory, each server handles roughly equal load. In practice, load is not just about how many keys a server holds - it is about how often those keys are accessed.

If one key is accessed a million times per second and another is accessed once per day, they are not equivalent even if they hash to the same server. A server that happens to hold several high-traffic keys will be overloaded while its neighbours sit idle. The ring does not know or care about access frequency - it only knows about hash values.

This is a hotspot. It shows up in two situations. The first is genuinely popular data - a trending video, a viral post, a real-time leaderboard. The second is bad key design. If your keys are not evenly distributed in the hash space - for example, if you use sequential IDs as keys and your hash function does not spread them well - you end up with servers that own disproportionately large key ranges.

The standard mitigation is to add randomness to hot keys. Instead of caching a viral video under one key, you cache it under 10 keys with a random suffix - video_4291_1 through video_4291_10 - and distribute reads across all 10. Each key lands on a different server. The load spreads. This works but it adds application-level complexity and means you need to aggregate reads across multiple keys, which increases latency slightly.

Operational Complexity

A consistent hashing ring in production is not a static data structure. Nodes join and leave constantly - planned maintenance, hardware failures, scaling events. Every membership change needs to be propagated to every node in the cluster so they all agree on the current state of the ring.

This is a distributed systems problem in itself. If two nodes have different views of the ring - one thinks Server E exists, another does not - they will route the same key to different servers. You get split reads, inconsistent data, or both.

Most systems that use consistent hashing solve this with a gossip protocol. Each node periodically exchanges ring state with a few random neighbours. Changes propagate through the cluster gradually. It is eventually consistent - there is a brief window after a membership change where different nodes have different views of the ring. In most cases this window is milliseconds to seconds. During that window, requests can be routed incorrectly.

The more nodes in your cluster, the longer changes take to propagate and the more coordination overhead you pay. This is why Cassandra reduced its default vnode count - with hundreds of nodes each running 256 vnodes, gossip messages carrying full ring state were becoming a significant portion of cluster traffic.

When Consistent Hashing Is Overkill

Consistent hashing solves a specific problem: minimising key remapping when cluster membership changes. If your cluster membership rarely changes or the cost of remapping is acceptable, consistent hashing adds complexity without enough benefit to justify it.

A single Redis instance handles millions of operations per second. If your traffic fits comfortably on one node, sharding with consistent hashing adds operational overhead, application complexity, and new failure modes for no gain.

A system with a fixed number of database shards that never changes gets nothing from consistent hashing. If you have 8 shards and you never add or remove shards, hash(key) % 8 works perfectly and is far simpler to operate and debug.

Consistent hashing makes sense when three things are true: your data does not fit on one node, your cluster size changes regularly, and the disruption of remapping keys during those changes has real consequences - either in terms of cache miss rate, rebalancing cost, or availability. If all three are true, consistent hashing is the right tool. If any of them is not, think twice before adding the complexity.

Thanks for supporting this newsletter. Y’all are the best!

Until next time!

Join 1,000+ engineers learning DevOps the hard way

Every week, I share:

How I'd approach problems differently (real projects, real mistakes)

Career moves that actually work (not LinkedIn motivational posts)

Technical deep-dives that change how you think about infrastructure

No fluff. No roadmaps. Just what works when you're building real systems.

👋 Find me on Twitter | Linkedin | Connect 1:1

Thank you for supporting this newsletter.

Y’all are the best.